AWS AgentCore: The Architecture Guide I Wish I Had

Written by Herman Lintvelt

Originally posted on Substack

I watched the first token appear, then a tool invocation flashed across the UI: “Looking up your profile...” The same query a week earlier had shown a blank screen for several seconds before any response. Same tools. Same model. Same system prompt. What changed was that I could finally see my agent work.

A few months ago, I was building an AI tax assistant on AWS. The agent had 11 tools, an SSH-tunneled connection to a MySQL database, a knowledge base for document retrieval, and a calculator API for tax computations. It worked. But the orchestration was opaque: no streaming of tool calls, no visibility into intermediate reasoning, and a noticeable silence between the user’s question and the first chunk of response.

Then I moved the same agent to AWS Bedrock AgentCore. Same tools, same model, same system prompt. But instead of handing my tools to a managed loop, I wrote a Python file (using the Strands SDK). A @tool decorator here, a stream_async() call there, a Dockerfile, and a deploy command.

Time-to-first-token dropped by roughly half -- a meaningful but not dramatic improvement. The qualitative shift was larger. Tool calls became visible in the stream. The system prompt was no longer constrained to 4,000 characters. I could set a breakpoint in my tool code and debug it. The agent felt responsive even on longer queries because I could show the user what was happening.

The shift felt disproportionate to the effort. I did not rewrite my agent from scratch. I did not learn a new framework. I wrote a Python file, structured it around a few patterns that turned out to matter a great deal, and deployed it. The hard parts were not where I expected them to be.

This is the article I wish I had when I started. Not the AWS docs (which are thorough but scattered), not a quickstart tutorial (which stops at “hello world”), but the architecture patterns and hard-won lessons from shipping a real agent to production on AgentCore. If you are building agentic AI backends and want to understand what AgentCore gives you, what it demands from you, and where you will lose hours if nobody warns you -- this is that guide.

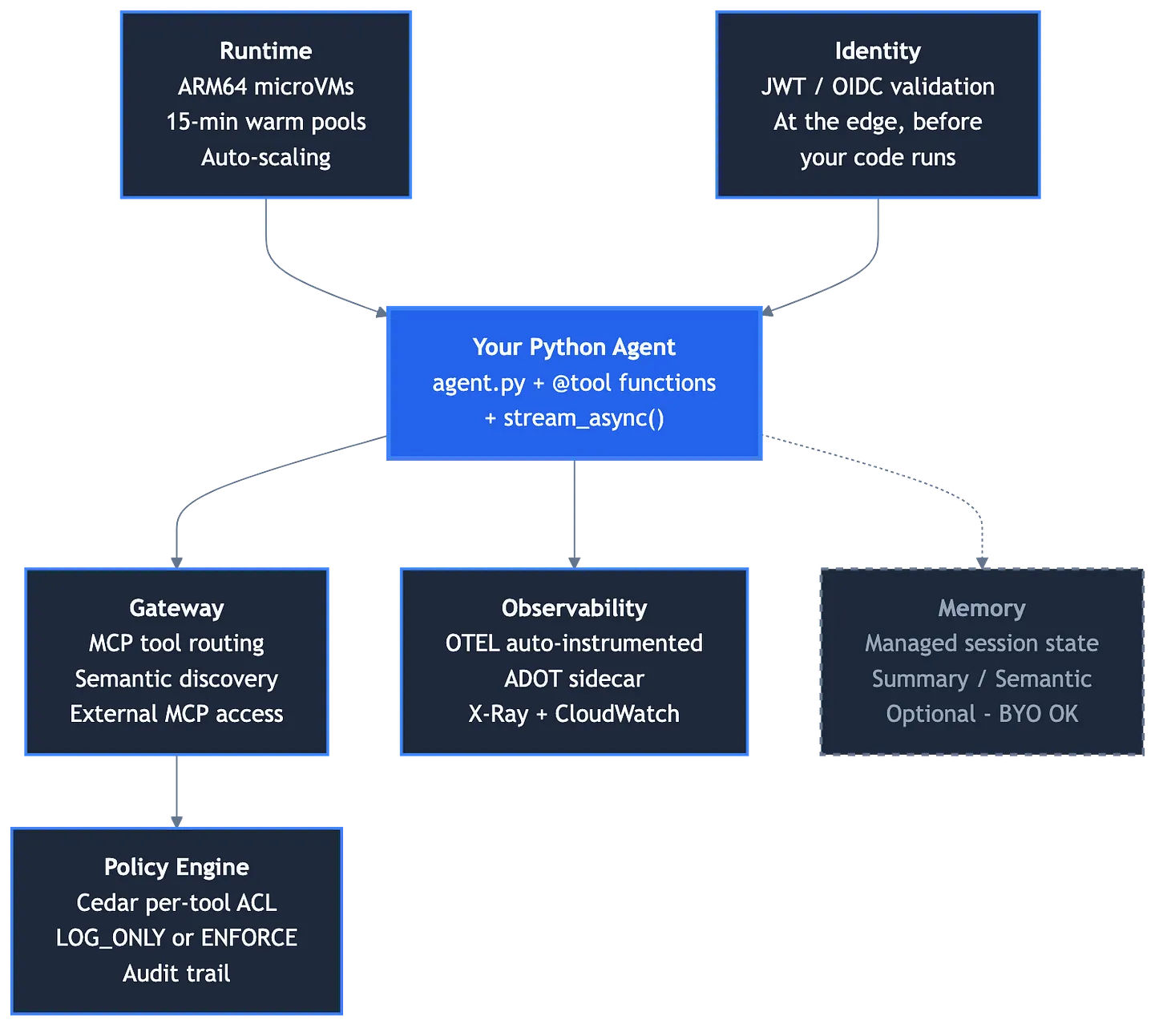

AgentCore is six managed services that turn your Python agent into a production system.

That is the one-sentence version. Here is what each service actually does:

Runtime is the compute layer. Your agent runs in ARM64 microVM containers with 15-minute warm pools. You provide a Dockerfile and a Python entrypoint. AgentCore handles auto-scaling, health checks, container lifecycle, and networking. There are no cold starts for active traffic -- warm pools keep containers ready. When traffic drops, containers scale to zero. When it returns, a warm container picks up the request.

Identity validates JWTs at the runtime layer before your code executes. It uses OIDC discovery to verify tokens, and the validated claims are available in your request context. Authentication happens before your agent code runs.

Gateway is an MCP-protocol tool gateway. Tools deployed behind the Gateway are accessible to any MCP-compatible client -- your agent, Claude Desktop, Cursor, ChatGPT. The Gateway routes tool calls to Lambda or OpenAPI targets, adding observability spans to each invocation.

Policy Engine uses Cedar, Amazon’s policy language, for declarative per-tool access control. You write policies like permit(principal, action, resource is AgentCore::Gateway) and apply them in LOG_ONLY mode to observe access patterns before enforcing.

Observability auto-instruments your agent with OpenTelemetry via an ADOT sidecar. Traces flow to X-Ray and CloudWatch, grouped by session and user. Model inference latency, tool execution time, and gateway round-trips surface automatically.

Memory offers managed session memory with summary, preference, and semantic strategies. It is optional -- I kept DynamoDB for conversation persistence because it was already working, and switching would have introduced risk for no immediate benefit.

The key insight: your agent.py is the center of this system. Everything else wraps around it. You do not configure AgentCore to run your agent. You write your agent, and AgentCore provides the production infrastructure.

This is a different mental model from most managed AI services. With Bedrock Agents, SageMaker, or similar platforms, you configure the platform and it runs your model. With AgentCore, you write a program and the platform runs it. The distinction is subtle but it changes everything about how you debug, iterate, and operate the system. When something goes wrong, you open your Python file and read the code. Not a configuration dashboard. Not a CloudFormation template. Your code.

The Entrypoint Pattern

The architecture of an AgentCore agent splits cleanly into two layers: module-level resources that stay warm across requests, and per-request resources that carry session identity.

Module-level resources are expensive to create and safe to reuse: the Bedrock model client, the system prompt loaded from a markdown file, and the list of tool functions. These initialize once when the container starts and persist for the microVM’s 15-minute warm pool lifetime.

Per-request resources must be unique to each invocation: the Agent instance (which carries trace_attributes for OTEL session grouping), the MCP client (which carries the user’s JWT), and the session context injected into the prompt.

The entrypoint is an async generator that yields SSE-formatted strings:

    import json
    from pathlib import Path

    from strands import Agent
    from strands.models import BedrockModel
    from bedrock_agentcore.runtime import BedrockAgentCoreApp

    app = BedrockAgentCoreApp()

    # Module-level: warm across requests
    model = BedrockModel(model_id="us.anthropic.claude-sonnet-4-20250514-v1:0",
                         cache_prompt="default")
    SYSTEM_PROMPT = Path("prompts/system.md").read_text()
    # ALL_TOOLS (the list of @tool functions) is also defined at module level

    @app.entrypoint
    async def handle_invocation(payload, context):
        # session_id, user_id and user_query are extracted from payload/context (omitted here)
        # Per-request: carries session identity
        agent = Agent(
            model=model, system_prompt=SYSTEM_PROMPT, tools=ALL_TOOLS,
            trace_attributes={"session.id": session_id, "user.id": user_id},
        )
        async for event in agent.stream_async(user_query):
            if "data" in event:
                yield f"event: chunk\ndata: {json.dumps({'content': str(event['data'])})}\n\n"

The stream_async() method yields every event the model produces: text generation, tool selection, tool results, and completion signals. You decide what to forward to the frontend. My agent uses four SSE event types:

- chunk -- text tokens as they generate (the content the user sees streaming in)

- trace -- tool invocations with the tool name (the frontend shows “Calling get_profile...”)

- done -- session metadata and processing time (for analytics and conversation persistence)

- error -- structured failure messages

This contract is simple enough to implement in any frontend framework and rich enough to build a responsive UI. The user sees text streaming in real time, knows when the agent is calling a tool and which one, and gets timing data for every interaction.
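As a rough sketch of that contract, the helper below formats the four event types as SSE frames. The function name `sse` and the payload field names (`tool`, `session_id`, `processing_ms`, `message`) are illustrative assumptions, not part of Strands or AgentCore.

```python
import json

EVENT_TYPES = {"chunk", "trace", "done", "error"}


def sse(event_type: str, payload: dict) -> str:
    """Format one Server-Sent Events frame: event line, data line, blank line."""
    if event_type not in EVENT_TYPES:
        raise ValueError(f"unknown SSE event type: {event_type}")
    return f"event: {event_type}\ndata: {json.dumps(payload)}\n\n"


# One frame per event type (payload field names are hypothetical).
frames = [
    sse("trace", {"tool": "get_profile"}),
    sse("chunk", {"content": "Your latest return"}),
    sse("done", {"session_id": "abc123", "processing_ms": 1840}),
    sse("error", {"message": "upstream timeout"}),
]
```

Keeping the event names in one set means a typo in an event type fails loudly on the server instead of being silently ignored by the frontend.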

Two small details in the entrypoint have outsized production impact.

cache_prompt="default" enables Anthropic’s prompt caching with a 5-minute TTL. The system prompt tokens are cached across requests on the same microVM. In my case, a 3,000-token system prompt that previously cost full input pricing on every invocation now costs a fraction after the first request. Roughly 90% cost reduction on system prompt tokens for active users.

trace_attributes injects session and user identifiers into every OTEL span the agent generates. Without this, traces exist but float anonymously in X-Ray. With it, the AgentCore Observability dashboard groups traces into sessions, and you can follow a single user’s journey through tool calls, model inference, and response streaming. This is the line that makes observability useful rather than just present.
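To see where a figure like "roughly 90%" comes from, here is a back-of-the-envelope sketch. It assumes cache writes are billed at 1.25x the normal input-token price and cache reads at 0.1x, and that every request after the first arrives within the cache TTL. Both multipliers are assumptions to check against current pricing for your model.

```python
def cached_prompt_savings(requests: int,
                          write_multiplier: float = 1.25,
                          read_multiplier: float = 0.10) -> float:
    """Fraction of system-prompt input cost saved versus no caching.

    Assumes the first request writes the cache and every later request
    reads it. Multipliers are relative to the normal input-token price
    and are assumptions, not quoted prices.
    """
    if requests < 1:
        raise ValueError("requests must be >= 1")
    uncached = float(requests)  # every request pays full price
    cached = write_multiplier + (requests - 1) * read_multiplier
    return 1.0 - cached / uncached


for n in (1, 10, 100):
    print(n, cached_prompt_savings(n))
```

Under these assumptions a single isolated request costs slightly more than an uncached one, and the saving approaches 90% only as a warm cache serves many requests. That is consistent with the "active users" qualifier.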

Tools Are Just Python Functions

This is the part of AgentCore that made me rethink how I build agent tools.

A Strands tool is a Python function with a @tool decorator. The docstring becomes the description the LLM sees when deciding whether to call it. The type hints become the JSON schema for parameter validation. The return value is a JSON-encoded string.

    import json

    from strands import tool

    @tool
    def get_profile(user_id: str, return_id: str = "") -> str:
        """Get user profile including personal details and latest tax return data.

        Use when the user asks about their profile or tax situation.

        Args:
            user_id: The TaxTim user ID.
            return_id: Optional return ID. If empty, uses the latest.
        """
        # get_pool() and format_profile() are application helpers defined elsewhere
        with get_pool() as conn:
            with conn.cursor() as cur:
                cur.execute("SELECT * FROM user WHERE id = %s", (user_id,))
                row = cur.fetchone()
        return json.dumps({"success": True, "profile": format_profile(row)})

No OpenAPI spec. No serialization layer. No separate schema file. The tool definition and the tool implementation are in the same place. When I need to change what a tool does or how the model understands it, I edit one function.

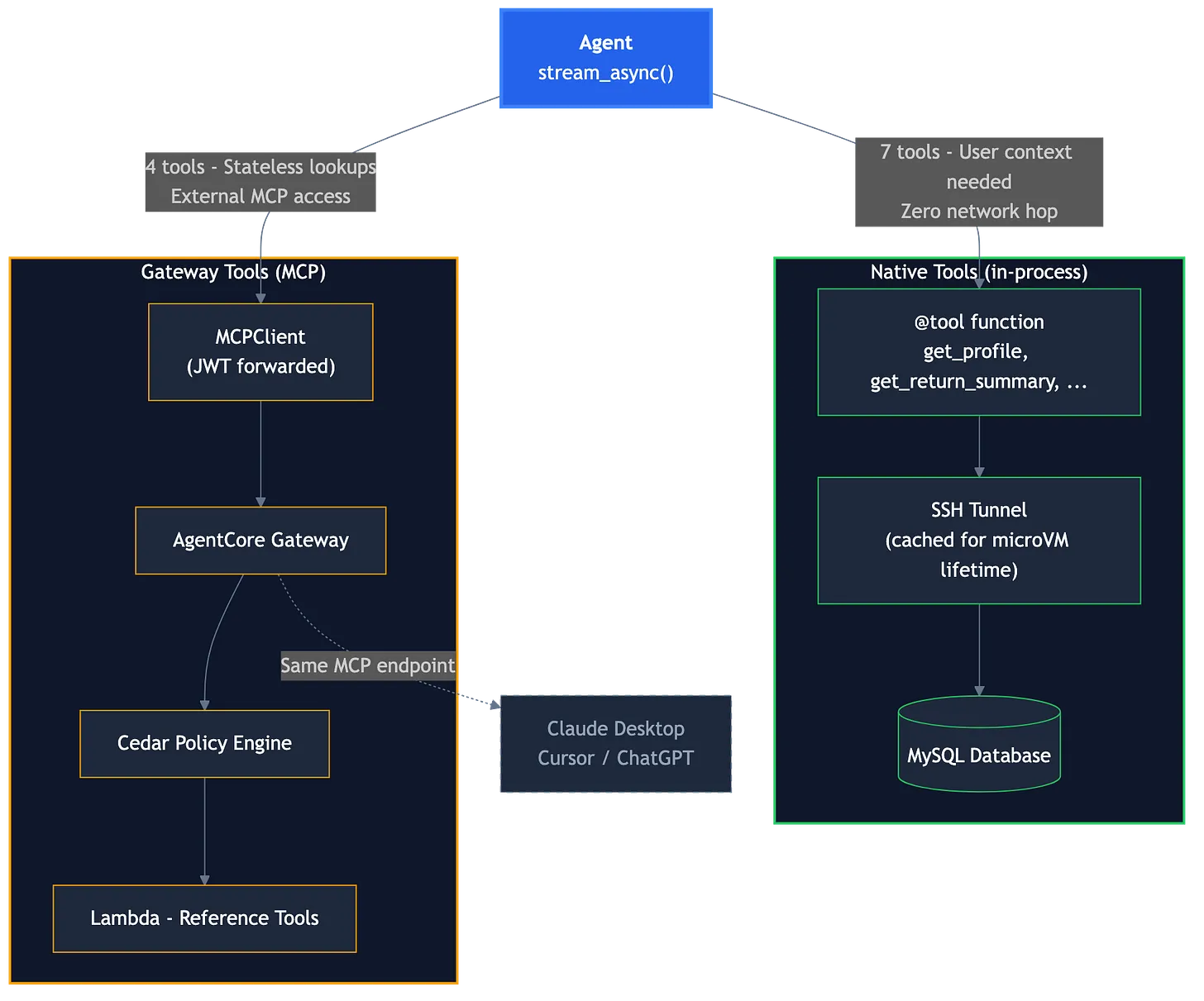

My production agent has 11 tools split across two architectures, and the split criterion matters more than I initially expected.

Seven native tools run in-process inside the agent container. These are the tools that need user-specific context: profile lookup, tax data, help section answers, workflow help, tax correspondence. They query a MySQL database through an SSH tunnel that initializes on the first call and persists for the microVM’s lifetime. Zero network hops for tool execution. Access to the full request context.

Four gateway tools run behind the AgentCore Gateway as a Lambda function. These are stateless: tax table lookup, source code search, knowledge base retrieval, and tax calculation. They do not need user context -- they take parameters and return reference data.

The gateway tools get three benefits that native tools do not. Cedar policy enforcement gives you an audit trail of every call. Per-tool observability generates separate spans for each tool invocation. And because the Gateway speaks MCP, the same tools are accessible from Claude Desktop, Cursor, or any other MCP client.

I started with all tools native. Once the agent worked end-to-end, I extracted the stateless ones to Gateway. This is the approach I would recommend: get your agent working first, then split tools strategically. The extraction is low-risk because gateway tools have the same interface as native tools from the model’s perspective. The agent does not know or care whether search_knowledge_base runs in-process or behind an MCP gateway. It calls the tool, gets a result, and continues.

One operational detail worth noting: gateway tools appear in the agent’s tool list with a namespace prefix (e.g., reference-tools___search_knowledge_base). If your frontend displays tool names from trace events, you will want to strip the prefix or the user sees an ugly internal identifier instead of a clean tool name.
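A minimal sketch of that cleanup, assuming the triple-underscore separator seen in the example name above (the function name is hypothetical):

```python
def display_tool_name(raw_name: str, separator: str = "___") -> str:
    """Return the tool name without its gateway prefix, if it has one."""
    _prefix, found, name = raw_name.partition(separator)
    # partition() returns an empty separator when none was found,
    # which is the case for native tools.
    return name if found else raw_name


print(display_tool_name("reference-tools___search_knowledge_base"))
print(display_tool_name("get_profile"))
```

Native tool names pass through unchanged, so the same helper can be applied to every trace event.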

The composition happens at request time:

    from mcp.client.streamable_http import streamablehttp_client
    from strands.tools.mcp import MCPClient

    # Gateway tools discovered per-request (JWT forwarded for auth)
    mcp_client = MCPClient(
        lambda: streamablehttp_client(GATEWAY_MCP_URL, headers={"Authorization": auth_header})
    )

    with mcp_client:
        gateway_tools = mcp_client.list_tools_sync()
        # Both sets passed to the per-request Agent
        agent = Agent(model=model, system_prompt=SYSTEM_PROMPT,
                      tools=ALL_TOOLS + gateway_tools)  # 7 native + 4 gateway

The MCPClient is created per-request because it forwards the user’s JWT to the Gateway. When no Bearer token is available (during development with test auth), the agent falls back to native tools only. This graceful degradation keeps the development loop fast.

Identity, Policies, and the Auth Stack

AgentCore Identity validates JWTs at the runtime layer using an OIDC discovery URL. When it works, it is elegant: the token is verified before your code runs, validated claims are available in the request context, and you never write token verification logic.

The catch: Identity requires a full OIDC discovery endpoint. Your auth backend must serve a /.well-known/openid-configuration document that returns issuer metadata and points to a JWKS endpoint. If you use Cognito, Auth0, or Okta, this works out of the box. If your auth system is a custom JWT issuer, you may not have this.

The OIDC requirement caught me off guard. Our auth backend issued valid JWTs but had no discovery endpoint. The options were:

- Add OIDC discovery to the auth backend. Two endpoints: the openid-configuration document (static JSON) and the JWKS endpoint it references. This is the clean solution.

- Build a shim. A CloudFront function or static S3 file that serves the discovery document pointing to the existing JWKS endpoint.

- Skip Identity and validate in your agent code. Decode the JWT yourself, extract claims manually. You lose runtime-level validation but avoid the OIDC requirement entirely.
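For the first two options, the discovery document itself is small. The sketch below builds one with the standard library. The URLs and the `/authorize` path are placeholders, RS256 is an assumed signing algorithm, and the field set follows the OpenID Connect Discovery metadata rather than anything AgentCore-specific, so verify which fields your setup actually needs.

```python
import json


def build_discovery_document(issuer: str, jwks_uri: str) -> dict:
    """Build a minimal OpenID Connect discovery document (illustrative)."""
    issuer = issuer.rstrip("/")
    return {
        "issuer": issuer,
        "jwks_uri": jwks_uri,
        "authorization_endpoint": f"{issuer}/authorize",  # placeholder path
        "response_types_supported": ["id_token"],
        "subject_types_supported": ["public"],
        "id_token_signing_alg_values_supported": ["RS256"],  # assumed algorithm
    }


doc = build_discovery_document(
    "https://auth.example.com",
    "https://auth.example.com/.well-known/jwks.json",
)
print(json.dumps(doc, indent=2))
```

The `issuer` value should match the `iss` claim in your tokens exactly. A mismatch there is a common cause of rejected tokens.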

We went with option 1, and the backend team added the endpoints. Once the discovery URL resolved correctly, the agentcore.json configuration was straightforward:

    "authorizerType": "CUSTOM_JWT",
    "authorizerConfiguration": {
      "customJwtAuthorizer": {
        "discoveryUrl": "https://auth.example.com/.well-known/openid-configuration",
        "customClaims": [{
          "inboundTokenClaimName": "roles",
          "inboundTokenClaimValueType": "STRING_ARRAY",
          "authorizingClaimMatchValue": {
            "claimMatchOperator": "CONTAINS",
            "claimMatchValue": { "matchValueString": "ROLE_USER" }
          }
        }]
      }
    }

The customClaims block rejects tokens that lack the ROLE_USER role before your code executes. This is infrastructure-level access control, separate from the Cedar per-tool policies in the Gateway.

One detail that tripped me up: the requestHeaderAllowlist controls which HTTP headers reach your agent code. Only allowlisted headers pass through. If you need the Authorization header to forward JWTs to Gateway tools, it must be explicitly listed. I spent time debugging “empty auth header” errors before discovering this.

Cedar policies operate at the tool level. Start with LOG_ONLY mode to observe which tools are called and by whom:

permit(principal, action, resource is AgentCore::Gateway);

That single line permits all authenticated callers to invoke any gateway tool. It is the right starting point. Tighten per-tool when you understand your access patterns.

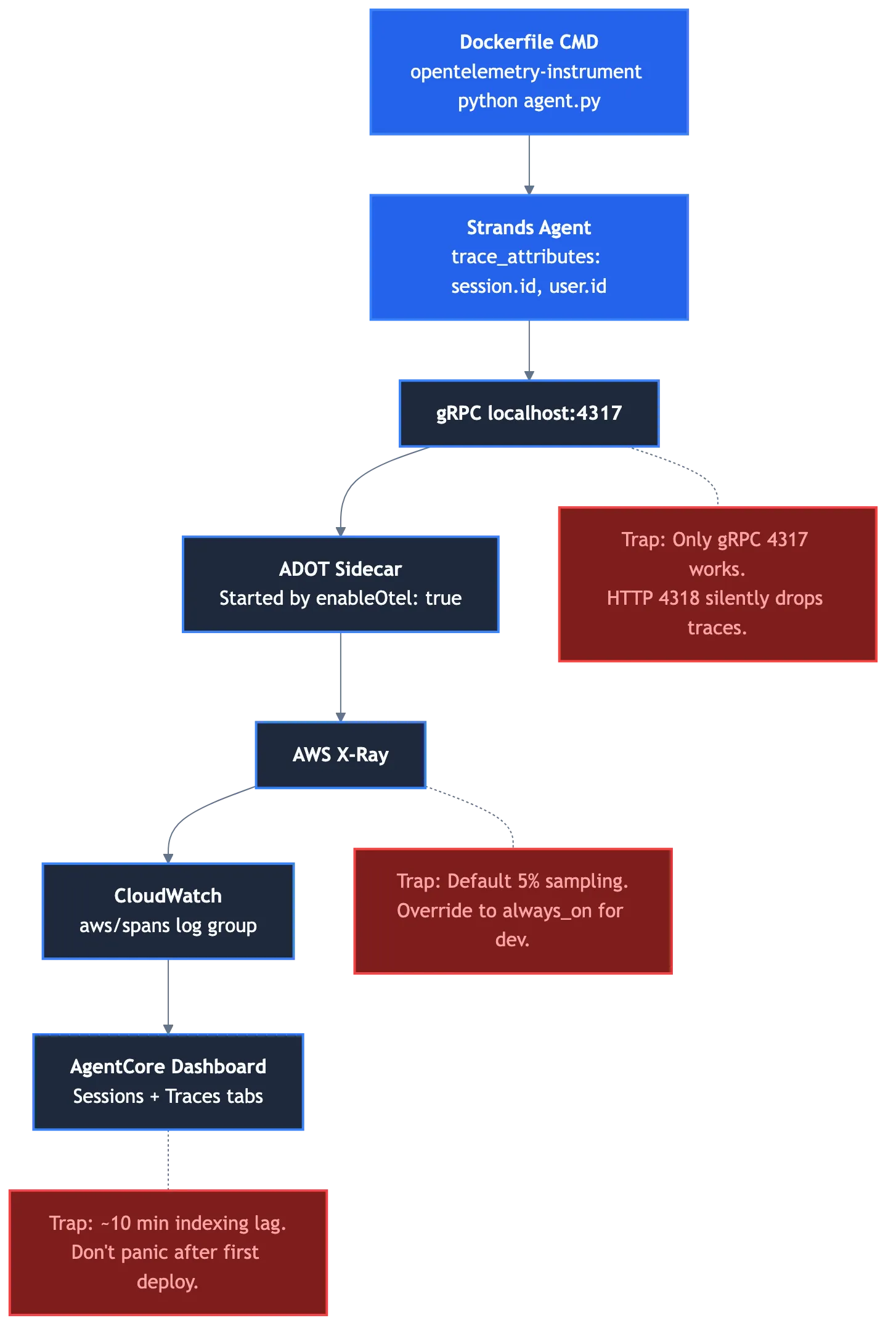

Observability That Actually Works (After Three Traps)

The observability setup is the part of AgentCore that gave me the most trouble and, once working, the most value.

Three things must be in place. Miss either of the first two and you get no traces at all; miss the third and traces arrive but are not grouped by session.

First, enableOtel: true in agentcore.json. This starts the ADOT sidecar alongside your container.

Second, opentelemetry-instrument as the CMD prefix in your Dockerfile:

CMD ["opentelemetry-instrument", "python", "agent.py"]

Third, trace_attributes on the Agent constructor for session grouping (shown in the entrypoint pattern above).

Three environment variables configure the OTEL distro:

    {"name": "OTEL_PYTHON_DISTRO", "value": "aws_distro"},
    {"name": "OTEL_PYTHON_CONFIGURATOR", "value": "aws_configurator"},
    {"name": "OTEL_TRACES_SAMPLER", "value": "always_on"}

Now the traps.

Trap 1: gRPC port 4317 only. The ADOT sidecar listens on gRPC port 4317. Do not set OTEL_EXPORTER_OTLP_ENDPOINT to HTTP port 4318. It will silently drop every trace. Nothing in the logs tells you this is happening. I discovered it by running netstat inside the container.

Trap 2: Default 5% X-Ray sampling. I deployed, invoked the agent, checked the dashboard, saw nothing. Concluded observability was broken. It was not -- X-Ray was sampling 5% of traces, so at development volumes most of my requests were never recorded. Set OTEL_TRACES_SAMPLER to always_on for development. Switch to a lower rate for production.

Trap 3: Dashboard indexing lag. The AgentCore Observability dashboard has approximately 10 minutes of indexing lag. After deploying a new agent version, the first traces will not appear for roughly 10 minutes. I verified this by checking the aws/spans CloudWatch log group directly -- the spans were arriving, but the dashboard had not indexed them yet.

I lost four hours to the sampling rate trap alone. The fix was one line of configuration.
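Because the first two traps fail silently, a startup check can save time. The following is a sketch of such a check, using only the variable names and values mentioned in this section. The function name is hypothetical, and it cannot detect the dashboard indexing lag.

```python
import os


def check_otel_env(env: dict) -> list[str]:
    """Return human-readable warnings for the OTEL misconfigurations above."""
    warnings = []
    endpoint = env.get("OTEL_EXPORTER_OTLP_ENDPOINT", "")
    if ":4318" in endpoint:
        warnings.append("OTEL_EXPORTER_OTLP_ENDPOINT points at HTTP port 4318; "
                        "the sidecar listens on gRPC port 4317")
    expected = {
        "OTEL_PYTHON_DISTRO": "aws_distro",
        "OTEL_PYTHON_CONFIGURATOR": "aws_configurator",
        "OTEL_TRACES_SAMPLER": "always_on",  # development setting
    }
    for name, value in expected.items():
        if env.get(name) != value:
            warnings.append(f"{name} is {env.get(name)!r}, expected {value!r}")
    return warnings


if __name__ == "__main__":
    for warning in check_otel_env(dict(os.environ)):
        print("WARNING:", warning)
```

Running this at container start (or in CI against the deployed configuration) turns a silent drop into a visible log line. In production you would relax the sampler check to match whatever rate you chose.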

When everything works, the value is immediate. Per-request traces show Bedrock model inference latency, tool execution time, gateway round-trips, DynamoDB operations, and everything grouped by session and user. The model call is the largest span. Tool calls nest inside it. Gateway round-trips appear as child spans. The trace tells the complete story of each request -- what the agent decided, how long each step took, and where time was spent.

Two Commands and a Dockerfile

AgentCore has a dual-track deployment model. This is the part that initially confused me, but it makes sense once you understand what each track is responsible for.

CDK defines the shape of your deployment. This is where I was pleasantly surprised: the @aws/agentcore-cdk package exposes L3 constructs (AgentCoreApplication, AgentCoreMcp) that let you declare the AgentCore Runtime, Identity authorizer, Gateway, Cedar policies, and memory configuration right alongside your IAM roles, DynamoDB tables, and Lambda functions. AgentCore resources are CloudFormation resources under the hood. You can manage them the same way you manage the rest of your AWS infrastructure.

The agentcore CLI handles the container lifecycle: building the ARM64 image, pushing it to ECR, and rolling the new image out to the Runtime. This is the code-push counterpart to CDK’s infrastructure-as-code. CDK says “here is the shape of my runtime”; agentcore deploy says “here is the new container image to run in it.”

Two commands, always both, always in order:

    # Infrastructure shape: IAM, DynamoDB, API Gateway, AgentCore Runtime + Gateway (via @aws/agentcore-cdk)
    AWS_PROFILE=myprofile npx cdk deploy MyAgentStack --exclusively

    # Container build + push + rollout
    agentcore deploy -y

The --exclusively flag prevents CDK from redeploying unrelated stacks. The -y flag skips the interactive confirmation prompt on the AgentCore CLI.

The Dockerfile is the contract between your code and AgentCore Runtime:

    FROM --platform=linux/arm64 public.ecr.aws/docker/library/python:3.11-slim-bookworm
    WORKDIR /app
    RUN apt-get update && apt-get install -y --no-install-recommends \
        gcc libmariadb-dev && rm -rf /var/lib/apt/lists/*
    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt
    COPY . .
    EXPOSE 8080
    CMD ["opentelemetry-instrument", "python", "agent.py"]

That is the full Dockerfile. ARM64 base image, install dependencies, copy code, expose port 8080, run with OTEL instrumentation. There is nothing hidden.

If you use the CLI-driven path instead of the CDK constructs, agentcore.json is your source of truth for runtime configuration, environment variables, gateway targets, Cedar policies, and memory settings. In practice I use both: CDK for the AgentCore resources that need to live next to IAM policies and DynamoDB grants, agentcore deploy for the container push.

In a CI/CD pipeline, this becomes two sequential steps using the same AWS credentials: first cdk deploy to ensure the infrastructure shape is current, then agentcore deploy to push the agent container. Once your infrastructure is stable, agentcore deploy is the step you run most often -- agent code changes do not require a full CDK cycle.

A practical note on iteration speed: during development, I skip CDK entirely and just run agentcore deploy -y. The container builds in about 90 seconds and deploys in another 60. From code change to live agent in under three minutes. That feedback loop makes a real difference when you are iterating on tool behavior or prompt engineering.

Three Patterns That Surprised Me

These are the non-obvious lessons from production that are not in the documentation.

- Per-request Agent, module-level everything else.

I initially created the Agent at module level, thinking it would be more efficient. It broke observability. The trace_attributes are set at Agent creation time, and every request needs its own session ID and user ID in the traces. Module-level Agent means every trace carries the same (or no) session identity.

The fix: create the Agent per-request. It is lightweight -- the expensive resources (model client, tool list, system prompt text) are module-level singletons that the Agent references. Creating an Agent object is just assembling a configuration struct with pointers to shared resources.

Get this boundary wrong and you either break observability (module-level Agent) or kill performance (re-creating the model client, tool list, and prompt on every request).

- MCPClient needs ExitStack to survive the stream.

The MCPClient is a context manager that must stay alive for the entire streaming duration. Tool calls happen mid-stream -- the model generates text, decides to call a gateway tool, the MCPClient routes it, the response comes back, the model continues generating. If the MCPClient is closed before streaming finishes, gateway tool calls fail silently.

The pattern that works:

    import contextlib

    # Inside the async entrypoint generator
    gateway_tools = []  # stays empty when no Bearer token is available
    with contextlib.ExitStack() as stack:
        if mcp_client:
            stack.enter_context(mcp_client)
            gateway_tools = mcp_client.list_tools_sync()
        agent = Agent(model=model, tools=ALL_TOOLS + gateway_tools)
        async for event in agent.stream_async(query):
            yield format_sse(event)

The ExitStack keeps the MCPClient open until streaming completes and all tool calls have resolved.
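The same lifetime behavior can be reproduced without any AgentCore dependencies. In this sketch, `FakeClient` is a hypothetical stand-in for a context-managed client. It shows that a context entered through the ExitStack stays open across the generator's suspension points and closes only after the last yield.

```python
import asyncio
import contextlib


class FakeClient:
    """Stand-in for a context-managed client such as an MCP session."""

    def __init__(self):
        self.open = False

    def __enter__(self):
        self.open = True
        return self

    def __exit__(self, *exc):
        self.open = False
        return False

    def call_tool(self, name: str) -> str:
        if not self.open:
            raise RuntimeError("client closed before the stream finished")
        return f"{name}: ok"


async def stream(client):
    """Async generator that uses the client mid-stream, like a gateway tool call."""
    with contextlib.ExitStack() as stack:
        if client is not None:
            stack.enter_context(client)  # optional resource, entered conditionally
        yield "text before tool call"
        await asyncio.sleep(0)  # generator suspends; the client stays open
        if client is not None:
            yield client.call_tool("search_knowledge_base")
        yield "text after tool call"


async def collect(client):
    return [chunk async for chunk in stream(client)]


client = FakeClient()
chunks = asyncio.run(collect(client))
```

The ExitStack earns its place because the client is optional: a plain `with` statement cannot be entered conditionally without duplicating the streaming loop.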

- User ID caching at the microVM level.

My agent resolves email addresses to numeric MySQL user IDs on every request. The first request requires a database query through the SSH tunnel. Subsequent requests for the same user hit a simple dictionary cache that survives the 15-minute warm pool.

    _user_id_cache: dict[str, str] = {}

    def _resolve_mysql_user_id(email: str) -> str:
        if email in _user_id_cache:
            return _user_id_cache[email]
        # ... DB lookup ...
        _user_id_cache[email] = uid
        return uid

One database lookup per user per container lifetime instead of one per request. In a typical session with 5 to 10 messages, this saves 4 to 9 database round-trips through the SSH tunnel. Small detail, meaningful latency improvement -- especially because the SSH tunnel itself has nontrivial overhead on its first connection (~300ms for secrets fetch and handshake).

The broader principle: module-level state in AgentCore is not ephemeral. The microVM warm pool means your module-level singletons (model client, SSH tunnel, ID cache) survive across requests for 15 minutes. Design for this. Put initialization work at module level and let the warm pool amortize the cost.

Where AgentCore Excels (and Where It Does Not)

I am enthusiastic about AgentCore. I also want you to know what to watch for.

Where it excels:

Full streaming control. You see every event: text chunks as they generate, tool invocations as they happen, completion metadata with timing. Your frontend can show “Looking up your profile...” during tool execution instead of a generic spinner. I was surprised by how much this changed the perceived performance. Even when total response time was similar, users felt the agent was faster because they could see it working. Visible progress beats invisible processing.

Python-native tools. The @tool decorator eliminates the Lambda-per-tool-set pattern. Eleven tools in my agent are eleven functions in a few Python files. Adding a tool means writing a function. Changing a tool description means editing a docstring.

Prompt caching. One parameter -- cache_prompt="default" -- reduces system prompt costs by roughly 90% for active users. The 5-minute TTL aligns naturally with the microVM warm pool.

Gateway as multi-client tool server. The same MCP endpoint that serves your agent also serves Claude Desktop, Cursor, and any other MCP client. Build once, expose everywhere, with Cedar policy enforcement on every call.

Where it does not (yet):

OIDC discovery is a hard gate. If your auth system does not expose /.well-known/openid-configuration, AgentCore Identity will not accept your tokens. There is no workaround at the infrastructure level -- you either add the endpoint, build a shim, or skip Identity and validate tokens in code.

Observability has too many silent failure modes. The sampling rate, the gRPC port, the IAM permissions, the indexing lag -- each is individually simple to fix, but finding which one is wrong requires systematic elimination. When traces silently disappear, you do not get an error message telling you why.

Python only. The Strands SDK is Python. If your team works in TypeScript or Go, the migration includes a language switch. That is not a small cost.

Rougher documentation. AgentCore is newer than Bedrock Agents, and the documentation reflects that. You will discover behavior through experimentation that should have been documented. The requestHeaderAllowlist, the gRPC-only OTEL port, the sampling rate defaults -- all learned the hard way.

No built-in traffic splitting. There is no native A/B testing or canary deployment between agent versions. If you want to run old and new agents in parallel and gradually shift traffic, you build that yourself with feature flags and API Gateway routing. During our migration, we ran both systems against the same DynamoDB table and used a feature flag to route traffic. It worked, but it was our code, not AgentCore’s feature.
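One common way to build that kind of flag is deterministic bucketing by user ID, so a given user always lands on the same agent while the rollout percentage rises. This is a generic sketch, not the routing code from our migration. The function name and the choice of SHA-256 are assumptions.

```python
import hashlib


def routes_to_new_agent(user_id: str, rollout_percent: int) -> bool:
    """Deterministically assign a user to the new agent for a rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return bucket < rollout_percent


# The same user gets the same answer on every call for a given percentage.
decision = routes_to_new_agent("user-42", 25)
```

Because each user's bucket is fixed, raising the percentage only moves users from the old agent to the new one and never back. That keeps a conversation on one system while both write to the same table.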

Getting Started This Weekend

You do not need all six services to start. Here is the shortest path to a working agent deployed on AgentCore.

Step 1: Install the SDK.

pip install strands-agents bedrock-agentcore boto3

Step 2: Write your agent. One file. One tool. One system prompt. Use the entrypoint pattern from earlier in this article. Test locally with python agent.py -- Strands agents run locally against Bedrock without AgentCore infrastructure.
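When testing locally, it helps to confirm that the stream follows the four-event contract before a frontend is involved. The parser below is a minimal sketch for that. It assumes the response reaches your client in the `event:` / `data:` shape produced by the entrypoint, and you would feed it lines from whatever HTTP client you use against the local server.

```python
import json
from typing import Iterable, Iterator


def parse_sse(lines: Iterable[str]) -> Iterator[tuple[str, dict]]:
    """Yield (event_type, payload) pairs from SSE text lines."""
    event_type, data_lines = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line.startswith("event:"):
            event_type = line[len("event:"):].strip()
        elif line.startswith("data:"):
            data_lines.append(line[len("data:"):].strip())
        elif line == "" and data_lines:
            # A blank line terminates the frame.
            yield event_type, json.loads("\n".join(data_lines))
            event_type, data_lines = "message", []


sample = (
    'event: chunk\ndata: {"content": "Hello"}\n\n'
    'event: done\ndata: {"processing_ms": 12}\n\n'
)
events = list(parse_sse(sample.splitlines()))
```

A quick check such as asserting that the last event is `done` (or `error`) catches streams that end without a completion signal.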

Step 3: Create agentcore.json. Start with the minimal runtime configuration:

    {
      "runtimes": [{
        "name": "MyAgent",
        "build": "Container",
        "codeLocation": "agent/",
        "runtimeVersion": "PYTHON_3_13",
        "networkMode": "PUBLIC",
        "protocol": "HTTP",
        "modelProvider": "Bedrock",
        "instrumentation": { "enableOtel": true }
      }]
    }

Step 4: Write your Dockerfile. The one shown earlier in this article works. ARM64 base, install deps, opentelemetry-instrument python agent.py.

Step 5: Deploy.

agentcore deploy -y

That is it. Your agent is running on managed microVMs with auto-scaling and OTEL observability.

You do not need Identity, Gateway, Cedar, or Memory to start. Add them as you need them. The phased approach that worked for me:

Phase 1: All native tools, no Gateway, no Identity. Get the agent working and streaming correctly.

Phase 2: Extract stateless tools to Gateway. Add Cedar in LOG_ONLY mode. Enable Identity if your auth supports OIDC.

Phase 3: Enforce Cedar policies. Add Memory if session management is a bottleneck. Explore multi-agent patterns.

Each phase is independently deployable and independently valuable. Do not try to adopt all six services at once. The power of AgentCore’s modular design is that you can add services incrementally as your agent’s needs grow.

One thing I wish I had done differently: I would have set up observability (the three-part OTEL config) from Day 1, even during Phase 1. Debugging an agent without traces is slow. Debugging an agent with session-grouped traces showing tool timing and model latency is fast. The setup cost is three lines of configuration. The debugging cost without it is hours.

What This Means

I spent weeks working around the limitations of opaque agent orchestration. I optimized prompts for a 4,000-character ceiling. I built workarounds for invisible tool call latency. I accepted that debugging meant reading CloudWatch logs of a black box.

Then I spent a week writing Python and had something better. Modestly faster response times, yes -- but more importantly, visible tool execution, a system prompt as long as it needed to be, and an agent I could debug with a breakpoint.

AgentCore is not perfect. The documentation has gaps. The observability setup has traps. The OIDC requirement will block some teams. These are real costs.

But the core proposition is sound: write your agent as a Python program, and let the platform handle compute, scaling, identity, observability, and tool routing. The complexity you take on is the complexity of your agent logic. The complexity you delegate is the complexity of running it in production.

The best agent platform is the one that gets out of your way and lets you write code. For me, AgentCore can be that platform. Your mileage will vary -- your auth stack, your team’s Python comfort, your tolerance for documentation gaps will all factor in.

But the patterns in this guide should help you evaluate AgentCore honestly and, if you choose it, avoid the hours I lost learning them. Start with one tool, one system prompt, and agentcore deploy -y. You can always add complexity later. You cannot easily remove it.

Build the agent you wish you had.

Further Reading

Five resources I would point someone at who is starting with AgentCore today:

- Amazon Bedrock AgentCore Developer Guide -- the official docs. Thorough but scattered. Start with the “What is Bedrock AgentCore” overview, then jump to the specific service you need (Runtime, Gateway, Identity, Memory, Observability). Your canonical reference once you know what you are looking for.

- AgentCore Starter Toolkit Quickstart -- the fastest way to get a running agent. Zero to deployed in under five minutes using the AgentCore CLI’s scaffolder. Useful even if you plan to migrate an existing agent, because it shows you the minimal file layout AgentCore expects.

- Strands Agents SDK -- the framework you will actually write your agent in. Covers the @tool decorator, BedrockModel, stream_async(), and multi-agent patterns. If you only read one non-AWS reference, make it this one.

- awslabs/amazon-bedrock-agentcore-samples -- AWS’s official samples repo. End-to-end applications, feature demos, IaC templates. Faster to study than the docs when you need to see how the pieces fit together in working code.

- re:Invent 2025 AIM3310: Agents in the enterprise: Best practices with Amazon Bedrock AgentCore -- AWS PMs Kosti Vasilakakis and Maira Ladeira Tanke on moving agents from POC to production. Nine concrete practices including “observability from day one” and “deterministic code for calculations.” The production wisdom I wish I had before I started.